Summary

Vision

The Institute for Digital InnovAtion (IDIA) is George Mason University’s commitment to inclusively shaping the future of our digital society, promoting well-being, security, and prosperity.

IDIA is a sector leader that provides a transdisciplinary research, innovation, and next-generation workforce development strategy across the university for scaled, sustainable growth in digital innovation; leverages synergies, strengthening the innovation ecosystem and growing capacity for transdisciplinary research, scholarship, and innovation; supports placemaking, instigating and building research and innovation communities around places and activating and supporting a culture of transdisciplinary research and shared research infrastructure; and amplifies the visibility and awareness of George Mason University as a globally recognized leader for its world-class research, innovation, and economic impact activities, as well as its next-generation students and scholars.

OnAir Post: IDIA – Institute for Digital Innovation

News

The transdisciplinary team examines how cultural values and institutional policies shape AI infrastructures in national and global contexts.

George Mason University’s AI Strategies team gathers at the AI & Tech Policy Summer Institute: Antonios Anastasopoulos, Manpriya Dua, Jesse L. Kirkpatrick, Caroline Wesson, Vasilii Nosov, Amarda Shehu, Michael Hunzeker, and J.P. Singh (Not pictured: William “Webby” Applegate)

Just days after Mason’s spring commencement ceremonies, a cohort of 20 selected AI and Tech fellows gathered at the Mason Square campus for the first AI & Tech Policy Summer Institute, hosted by George Mason University’s AI Strategies team. In addition to AI Strategies, the three-day event was co-sponsored by Mason’s Institute for Philosophy and Public Policy, the Schar School of Policy and Government, the Institute for Digital Innovation, and the Center for Advancing Human-Machine Partnership. The event brought together scholars, industry experts, government officials, and civil society activists from multiple academic disciplines, backgrounds, and research interests.

The cohort convened to introduce master’s and doctoral students in the social sciences, humanities, and select professional schools to the fundamental engineering concepts about how AI works, policy and regulatory frameworks that are evolving to govern AI, debates on AI ethics, and issues surrounding security, economic, and human rights concerns from local to global levels.

AI Strategies is funded by a three-year, $1.39 million grant to study the economic and cultural determinants for global artificial intelligence infrastructures—and describe their implications for national and international security. The grant was awarded by the Department of Defense’s esteemed Minerva Research Initiative, a joint program of the Office of Basic Research and the Office of Policy that supports social science research focused on expanding basic understanding of security.

Researchers from the College of Humanities and Social Sciences’ Institute for Philosophy and Public Policy (i3p) have played a key role in the project from the pre-proposal stage to the present, providing insight on the ethical, social, and policy implications of emerging technologies. Research Associate Professor of Philosophy and i3p Acting Director Jesse Kirkpatrick, a member of the AI Strategies team, presented at the event. His presentation, “Responsible Innovation and National Security,” addressed existing efforts, challenges, and opportunities in responsible AI, and drew on his involvement in responsible AI research, policy, and practice across such sectors as academia, industry, and government.

“It’s no secret that there is a vital need for transdisciplinary mentorship and training in AI for our graduate students. What may be less obvious is that this must occur across disciplines,” Kirkpatrick said. “By engaging nearly 30 speakers and faculty, our 20 AI & Tech fellows got just that: a broad and deep look at the cutting edge of AI, inclusive of numerous perspectives.”

Kirkpatrick said that from the composition of the research team to the design and structure of the project and its research outputs, the people, process, and products have been thoroughly transdisciplinary. “This is a testimony to the team’s leadership, the support we have from our respective academic units, schools, and colleges, and the wonderful constellation of research centers and institutes,” he said.

J.P. Singh, Distinguished University Professor at the Schar School of Policy and Government, leads Mason’s transdisciplinary AI Strategies team. Following the event, Singh said, “We have received positive feedback in superlatives for the transdisciplinary training our master’s and doctoral students received in AI, including engineering, social science, and policy frameworks,” adding, “I work with an excellent and creative transdisciplinary team. Mason in general is such an exciting and innovative university.”

Following the event, the cohort of fellows will participate in a year-long fellowship through Mason’s Center for Advancing Human-Machine Partnership, a faculty-driven transdisciplinary research center.

Just what is AI?

This was one of the first questions posed by Missy Cummings, director of George Mason University’s Autonomy and Robotics Center, at the Future of AI roundtable in June.

“At the end of the day, AI can be a tool to help you in your job, but you need to understand both its strengths and weaknesses,” Cummings said. “That’s why Mason will be offering a certificate and master’s degree focused on responsible AI, so that people across industry and government can learn how to manage the risks while promoting the benefits of AI.”

The invite-only roundtable, hosted by the College of Engineering and Computing, explored the issues, challenges, and solutions to think about as AI technologies rapidly evolve and change almost as soon as they are introduced.

Cummings led the roundtable and highlighted AI areas like ChatGPT and autonomous driving systems that lack human reasoning skills. When human behavior, environment, and AI blind spots intersect, the resulting uncertainty contributes to fundamental limitations.

“As humans, we do a great job of successfully filling in the blanks when there is imperfect information,” said Cummings. “If I quickly flash a picture of a stop sign, your brain automatically recognizes what it is, even if it’s not a clear image. But it’s arguable whether the vision system on a self-driving car can do the same.”

She added that automation can fall apart at a critical threshold, such as when a car is supposed to stop at a stop sign that’s visible to the human eye but may be overlooked by an AI vision system.

“As humans, we have much more experience driving cars,” she said. “Self-driving cars are still a new technology and while simulation testing can help identify problems, real-world testing for such non-deterministic systems that never reason the same way twice is critical.”

One of her favorite uses of ChatGPT is having her students check grammar and spelling when they write papers, but that is the extent of it.

“ChatGPT cannot reason under uncertainty. It does not think. It does not know. It can approximate human knowledge, but there is no actual thinking or knowledge,” said Cummings. “ChatGPT goes after the most probable image, or the most probable grouping of words.”

In general, large language models like ChatGPT draw on what the average person is saying on the internet. This means they could pick up extremist views if those views are currently trending or popular online.

It can also be a concern when it comes to diversity, she said.

“If a company uses ChatGPT to write their mission statement, in another five years, everyone’s mission statement will be the same,” Cummings said. “Creative thoughts and authenticity are lost. It’s important to understand how far we push these models, and what the long-term ramifications could be.”

Roundtable attendees from local universities and tech companies left with a greater understanding of how AI can impact everyday life, in more ways than one.

Mason News – February 9, 2023

An Interview with Dr. Farrokh Alemi

Faculty proposes co-teaching course aided by artificial intelligence.

Dr. Farrokh Alemi is a professor in the Department of Health Administration and Policy at the George Mason College of Public Health. Dr. Alemi’s research expertise includes the use of data mining, natural language processing, and artificial intelligence in health services research.

Could faculty members really replace themselves with artificial intelligence?

I am already working on using ChatGPT and artificial intelligence (AI) in my courses. For example, the spring session of HAP 823 uses the public version of ChatGPT to explain concepts to students. In fact, I’ve also proposed to “replace” myself with AI in HAP 725 Statistical Process Control. Of course, AI could not really completely replace me for student interaction, grading exams, discussion groups, etc., but there are many steps I could take to automate this course fully. Here is an example of how ChatGPT can help: instead of me teaching how to code a control chart in Python, the public version of ChatGPT can draft the code and show students how it is done. It is my goal to make HAP 725 taught entirely by AI and for me to have “a human-in-the-loop function,” backing up the AI when it fails to deliver. What better way to teach about the potential of artificial intelligence?
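For readers unfamiliar with the control charts mentioned above, here is a minimal sketch of one common type, an individuals (X) chart with limits derived from the average moving range. It is not code from HAP 725 or from ChatGPT; the function names and the wait-time data are hypothetical and chosen only for illustration.

```python
from statistics import mean


def xmr_limits(values):
    """Return (center, lower, upper) limits for an individuals (X) chart."""
    if len(values) < 2:
        raise ValueError("need at least two observations")
    center = mean(values)
    # Moving ranges: absolute differences between consecutive observations.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    # 2.66 is approximately 3 / 1.128, where 1.128 is the d2 constant
    # for subgroups of size 2.
    spread = 2.66 * mean(moving_ranges)
    return center, center - spread, center + spread


def out_of_control(values, baseline):
    """Return indices of points outside limits computed from a baseline period."""
    _, lower, upper = xmr_limits(baseline)
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical daily wait times in minutes (illustrative data only).
baseline = [30, 32, 31, 29, 33, 30, 31, 32]
new_points = [31, 30, 45, 29]

if __name__ == "__main__":
    print(xmr_limits(baseline))
    print(out_of_control(new_points, baseline))
```

Limits are computed from a baseline period and then applied to new observations, so a single unusual point does not widen the limits it is judged against.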

What does it mean to have a human-in-the-loop function?

A human-in-the-loop function means that the faculty will supervise the messages to the students before the machine sends them. Students can also ask for a meeting with the faculty at any time.

If we can automate HAP 725, why would we need faculty in the classroom at all? What value does faculty provide if AI can do it for us?

Faculty still decide the content of the course and remain the people who respond to students. Automation allows one faculty member to reach a larger number of students. The role of the faculty is still critically important to teaching critical thinking skills and helping students develop skills in application and synthesis, especially innovative applications. This challenges faculty in higher education to move beyond learning mastery, a function AI may be able to support. Innovation and critical thinking: that is the domain of faculty and their teaching strategies and engagement with students.

Would ChatGPT be helpful in your current AI-powered decision aid for selecting antidepressants?

We are also working on using ChatGPT in our research. For example, we are working to improve feedback to people who use our decision aid on antidepressants (http://MeAgainMeds.com). Today, when ChatGPT answers a question on antidepressants, it gives very general advice. We need to make it very specific to be useful. Google Brain has made ChatGPT more specific by training it further. The specific version of Google Brain can pass the U.S. Medical Licensing Exam. Thus, it has more knowledge and is more specific to the exam. When it comes to antidepressant selection, none of the current versions of ChatGPT are sufficiently detailed to address the needs of patients. The system we are planning will be trained to do so.

What would it take to make ChatGPT more specific to serve your research purposes?

Unfortunately, the use of ChatGPT in our situation relies on their private engine, which is expensive. We expect simply training ChatGPT to understand formulas for diagnosis of COVID will cost us a minimum of $5,000. It could cost significantly more, upward of $50,000. The problem is that we need to generate all combinations of variables in the model, so a simple regression model with ten variables leads to 2^10 possible text-based scenarios; a model with 20 variables leads to 2^20 combinations, and so on.
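The combinatorial growth described here can be made concrete with a short sketch. It assumes each variable is binary (present or absent), which is what the 2^10 and 2^20 figures imply; the symptom names and the sentence template are hypothetical and are not taken from the research team's model.

```python
from itertools import product


def enumerate_scenarios(variables):
    """Yield every present/absent combination of the variables as a dict."""
    for values in product([False, True], repeat=len(variables)):
        yield dict(zip(variables, values))


def describe(scenario):
    """Turn one combination into a simple text-based scenario."""
    present = [name for name, on in scenario.items() if on]
    if not present:
        return "The patient reports none of the listed symptoms."
    return "The patient reports: " + ", ".join(present) + "."


# Hypothetical binary variables, for illustration only.
symptoms = ["fever", "cough", "fatigue"]

if __name__ == "__main__":
    for scenario in enumerate_scenarios(symptoms):
        print(describe(scenario))
    # The count doubles with every added variable.
    print(2 ** 10, 2 ** 20)
```

With three variables the loop prints eight scenarios; ten variables would give 1,024 and twenty would give 1,048,576, which is why the number of training texts becomes a cost concern.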

We can use LASSO regression to reduce the number of variables, but still, there are a lot of training combinations necessary. Of course, this is expected as formulas are a far more compact form of information than natural language. Nevertheless, natural language is how we talk to each other and how clinicians and patients talk, so the transition must happen.

In exploring the API of ChatGPT, we found that we can use statistical models as a component of ChatGPT. In our work, we use network models that are composed of a chain of regression equations. We are exploring how this can be built into ChatGPT.

How might you work around some of the costs associated with more specific symptoms using ChatGPT?

There are two ways to reduce the number of scenarios needed to train ChatGPT. One is to take advantage of independence structure among the variables. Our models of data provide information on independence of certain variables. For example, in COVID, we know that some symptoms’ contributions to the diagnosis of COVID are independent of other symptoms. Anytime you have independence, then you can reduce the combinations of symptoms needed.
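A rough sketch of why independence helps, under a simplifying assumption that is not stated in the interview: suppose the variables can be split into groups whose contributions to the diagnosis do not depend on one another, so each group can be enumerated separately. The group sizes below are hypothetical.

```python
def scenarios_needed(group_sizes):
    """Compare joint enumeration with per-group enumeration.

    group_sizes lists how many binary variables fall in each independent
    group (an illustrative assumption, not the team's actual model).
    """
    joint = 2 ** sum(group_sizes)  # every combination of all variables
    by_group = sum(2 ** size for size in group_sizes)  # each group on its own
    return joint, by_group


if __name__ == "__main__":
    # Ten variables split into independent groups of 4, 3, and 3.
    print(scenarios_needed([4, 3, 3]))
```

Under this assumption, ten variables in groups of four, three, and three need 16 + 8 + 8 = 32 scenarios rather than 1,024. How much is saved in practice depends on the actual independence structure found in the data.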

The second method is to use regression equations directly within ChatGPT. The application provides a method for combining regression equations with ChatGPT inferences. We need to combine a chain of regressions, which is a bit more complex, but it seems plausible that this can be done in the private application for ChatGPT.
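To illustrate what a chain of regressions means computationally, the sketch below evaluates two logistic regression stages in order, with the output of the first stage used as an input to the second. It does not show how such a chain would be connected to ChatGPT, which the interview leaves open; the stage names, variables, and coefficients are all hypothetical.

```python
import math


def logistic(z):
    """Map a linear score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def chain_predict(features, stages):
    """Evaluate regression stages in order.

    Each stage is (output_name, intercept, coefficients). The output of a
    stage is stored under output_name so later stages can use it as input.
    """
    values = dict(features)
    for output_name, intercept, coefficients in stages:
        z = intercept + sum(w * values[name] for name, w in coefficients.items())
        values[output_name] = logistic(z)
    return values


# Hypothetical two-stage chain with made-up coefficients.
STAGES = [
    ("respiratory_infection", -2.0, {"cough": 1.5, "fever": 1.0}),
    ("covid", -3.0, {"respiratory_infection": 4.0, "loss_of_smell": 2.0}),
]

if __name__ == "__main__":
    result = chain_predict({"cough": 1, "fever": 1, "loss_of_smell": 0}, STAGES)
    print(round(result["covid"], 3))
```

Stages must be listed so that every input a stage needs has already been computed or supplied, which is the ordering a chain or network of regressions imposes.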

How can ChatGPT help other efforts to organize natural conversations with patients?

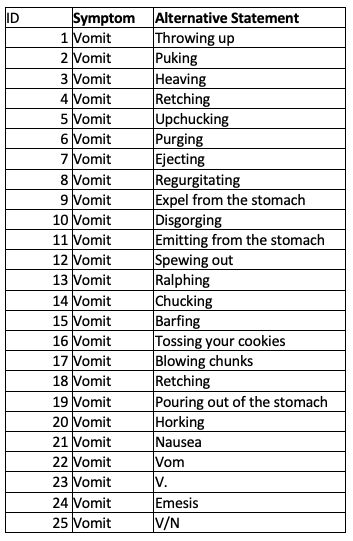

ChatGPT can help efforts to understand patients’ open comments by providing alternative ways of saying the same words/phrases. For example, we can use the public version of ChatGPT to learn words that can map to symptoms and then create a conversational method for patients to report their symptoms in English. Here is my initial attempt to do so for one symptom. Look at how comprehensive the list produced by ChatGPT for the symptom “vomit”:

There are 25 different ways to say, “I vomited.” This does not include misspelled words and Spanish words. But that, too, is available through reverse engineering some parts of ChatGPT. The point is that the public version of ChatGPT provides the elements needed to create our own algorithm for understanding text and producing the recommendation.
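One simple way to use such a phrase list is a lookup that maps a patient's free-text comment to symptoms. The sketch below is an assumption about how that could work, not the team's algorithm, and the phrases are hypothetical stand-ins rather than the 25 phrases produced by ChatGPT.

```python
import re

# Hypothetical phrase lists, for illustration only.
SYMPTOM_PHRASES = {
    "vomiting": ["vomited", "threw up", "puked", "was sick to my stomach"],
    "fever": ["fever", "high temperature", "running a temperature"],
}


def detect_symptoms(comment):
    """Return the symptoms whose phrases appear in a free-text comment."""
    # Lowercase, replace punctuation with spaces, and collapse whitespace.
    cleaned = " ".join(re.sub(r"[^a-z ]", " ", comment.lower()).split())
    # Pad with spaces so phrases match whole words only.
    padded = f" {cleaned} "
    found = []
    for symptom, phrases in SYMPTOM_PHRASES.items():
        if any(f" {phrase} " in padded for phrase in phrases):
            found.append(symptom)
    return found


if __name__ == "__main__":
    print(detect_symptoms("I threw up twice last night."))
```

Exact phrase matching like this misses misspellings and other languages, which is the gap the interview notes when it mentions misspelled and Spanish words.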

We’re hearing a lot about ChatGPT for essay writing. Can ChatGPT be used for math and science?

We are working on a project to enable ChatGPT, or any large language model, to learn from statistics, in particular from regression models and analysis of variance. Some components of this are already available in ChatGPT, but not in sufficient detail. A lot of science is expressed in mathematics. Right now, this is beyond the public version of ChatGPT. So, as a consequence, the public version’s reports are not detailed and tailored enough to a patient’s needs. The public version does not learn from formulas, and teams of scientists are needed to build formulas and calculators into ChatGPT. When we do so, then the version of ChatGPT that is trained on PubMed can come closer to artificial intelligence helping with the diagnosis or treatment of patients.

To correctly answer patients’ questions, large language models need to be trained on formulas and on meta-analysis of statistical models, the fundamental method and language of science. Our work in this area is focused on translating our recent formula-based model for the diagnosis of COVID into ChatGPT. We are also working on creating a similar tool for the selection of antidepressants.

About

Mission

At IDIA we turn problems of social anxiety into digital innovation with social resonance. We harness the power of different perspectives and ways of thinking. We solve complex problems that demand creativity, transdisciplinarity, and a sustained passion for digital innovation for good. From AI Innovation and Machine Learning, to Responsible AI, Autonomous and Collaborative Robotics, Cyberphysical Systems and Cybersecurity, Advanced Transportation Systems, Integrated Intelligence, Smart Cities, Quantum Computing, and more, we provide leadership in the technologies of the future. We elevate diversity, equity, and inclusion. We build an innovation community and answer the unique challenges of our time for the betterment of our society, our nation, and humankind.

The institute supports transdisciplinary work across three themes:

Technologies

Conceptualizing and designing novel algorithms, techniques, and technologies

Systems

Developing and deploying computing systems to advance fields as diverse as finance, education, built infrastructure, science, economics, agriculture, health, transportation, entertainment, national security, and social justice

Digital Society

Engaging in critical reflection that examines the implications of digital innovation to ensure that innovators are sensitive to designing and innovating responsibly, and that key stakeholders – including users, innovators, policy-makers and the public at large – are informed about technology’s social, ethical, political, and economic impacts

Nexus

IDIA is a nexus point for collaborative efforts, connecting organizations, people, problems, ideas, and technical approaches with a rich program of transdisciplinary and translational research, scholarship, and workforce development activities and mechanisms for maximum impact that is more than the sum of its parts. The institute was launched in 2022. Learn more about its history here.

IDIA Leadership

Amarda Shehu, PhD

Associate Vice President of Research

Institute for Digital InnovAtion

Kamaljeet Sanghera, PhD

Executive Director

Institute for Digital InnovAtion

Ziniya Zahedi, PhD

Assistant Director for Operations

Institute for Digital InnovAtion (IDIA)

Gilbert R Harris

Data Analyst

Institute for Digital InnovAtion (IDIA)

Internal Advisory Board

Karen A Bell

Grant Writer & Proposal Specialist

School of Business

Patrick Gillivet

Associate Dean of Research

College of Science

Deborah Goodings

Professor & Associate Dean

College of Engineering and Computing

Anthony Eamonn Kelly

Associate Dean for Research

CEHD Office of Research Development

Naoru Koizumi

Associate Dean of Research

Schar School of Policy and Government

Alison Landberg

Professor & Director

Center for Humanities Research

Holly Park

Interim Assistant Dean for Research

College of Health and Human Services

Arthur Pyster

Associate Dean for Research

College of Engineering and Computing

Michele Schwietz

Associate Dean for Research

College of Humanities and Social Sciences

Web Links

Programs

IDIA P3 Faculty Fellowship

Source: IDIA website

Dear Colleagues,

With this Dear Colleagues Letter (DCL), the Institute for Digital InnovAtion (IDIA) solicits applications for IDIA Public-Private-Partnership (P3) Faculty Fellows.

Program Description

The newly launched IDIA P3 Faculty Fellows Program seeks to stimulate research collaborations and partnerships between George Mason University and industry partners on impactful societal problems and cutting-edge digital solutions of consequence to the local, regional, or national economy. The program directly supports the growth of George Mason University’s digital innovation ecosystem and catalyzes a tightly integrated academe-industry community of innovation.

Through this DCL, IDIA charges Mason faculty and researchers with coming together with industry partners into deeply collaborative teams to further scientific and engineering foundations that enable future breakthrough technologies that are directly inspired by and address critical industry needs, as well as broadly benefit our society and constitute digital innovation for good. Competitive applications align with IDIA’s mission of catalyzing cutting-edge computing research that shapes the future of our digital society and promotes equality, well-being, security, and prosperity. More information about IDIA can be found at idia.gmu.edu.

Program Structure

IDIA P3 Faculty Fellows are expected to:

- Identify an industry partner that commits to a deeply collaborative relationship to work jointly on a problem of critical importance to industry and society at large

- Formulate a novel research approach that has high intellectual merit and promises to lead to a breakthrough technology with high broader impact

- Provide evidence of the commitment of the industry partner on joint research and innovation

Industry partners can have a local, regional, national, or international footprint. Evidence of their commitment can include one or more of the following:

- A letter of shared interests and mission by a company representative

- Access to and utilization of company data, hardware, and/or software infrastructure

- In-kind commitment by allocating company staff to work jointly with Mason faculty

- Opportunities for Mason undergraduate or graduate students potentially included in the project to further their experience through internships and other industry-housed activities and events

Awards

Awards are expected to be in the range of $50,000 to $75,000 for a duration of at most two years. Awards are restricted to Mason faculty and subject to George Mason University guidelines. Up to five awards are expected, based on the quality of submissions and the availability of funds.

Eligibility Information

Only current employees of George Mason University can apply. This program is limited to tenure-track, tenured, or research-track faculty. An individual may participate in only one proposal. Being a consultant for a company disqualifies that individual from participating in this program through a partnership with that company.

Program Requirements

An end-of-year ‘Come Meet our Fellows’ meeting that celebrates the achievements of IDIA fellows is anticipated, and awardees should plan for meaningful and active participation in this event. Fellows are additionally expected to provide an annual report of their progress and accomplishments.

IDIA P3 Faculty Fellows are expected to become an integral part of the IDIA community. As such, they will have the opportunity to: take part in community-building events with other IDIA faculty and predoctoral fellows; collaborate with other fellows to organize and support IDIA-sponsored events, such as seminars, panels, workshops, and tutorials; connect with IDIA’s rich network of academic, government, think tank, and industry partners around digital innovation; and engage with Mason Innovation Exchange and Mason Enterprise.

IDIA P3 Faculty Fellows will additionally have the opportunity to benefit from in-support activities, such as Mason Enterprise ICAPS, Accelerate, and other programs, through which they may further integrate themselves in Mason’s innovation ecosystem and bring their ideas and innovation from the lab to the market.

A successful IDIA P3 Faculty Fellows experience naturally positions fellows for industry-partnered external funding opportunities, which include but are not limited to the NSF GOALI program; the NSF Partnerships for Innovation (PFI) program; the NSF I-Corps program; the NSF Activate Program; larger-scale NSF programs, such as IUCRC, ERC, and STC; the Virginia Catalyst Award program; the NIH Bioengineering Partnerships with Industry; the NCI Academic-Industrial Partnerships for Translation of Technologies for Diagnosis and Treatment; the DTRA Transitions Program; the SBIR/STTR program; the DARPA Embedded Entrepreneurship Initiative; the DARPA Information Innovation program; and more.

Application Details

Applications should adhere to the template provided. Applications that do not follow the provided template will be returned without review.

The application template can be found at: IDIA-P3-Faculty_ApplicationTemplate.

Applications should be compiled into a single PDF, named FirstName-LastName-IDIA-PD.pdf, where FirstName and LastName refer to the first and last names of the applicant, and submitted via email to idia2@gmu.edu. The email subject should be: IDIA P3 Faculty Fellowship Application: First-Name Last-Name.

Applications will be reviewed by a team of interdisciplinary senior researchers and faculty, and awards will be made on a competitive basis.

Timeline and Other Information

Applications should be submitted by the following deadline: March 27, 2023, 11:59PM EST

Two office hours are planned to address questions from interested participants. These will be held remotely, via Zoom, on December 09, 2022 (10-11AM) and February 10, 2023 (10-11AM). RSVP for the sessions here.

Any questions before then can be directed to idia2@gmu.edu.

Sincerely,

Team IDIA

Amarda Shehu, Associate Vice President of Research, IDIA

Kammy Sanghera, Executive Director, IDIA

IDIA Predoctoral Fellowships

Source: IDIA website

Dear Patriots Letter: IDIA Predoctoral Fellowships

The Institute for Digital InnovAtion (IDIA) invites full-time George Mason University Ph.D. and M.S. students to apply for the competitive IDIA Predoctoral Fellowship Program. An IDIA predoctoral fellowship includes GRA AY stipend, tuition, and healthcare for up to three years.

Student applicants are encouraged to reach out to and form an interdisciplinary team of faculty mentors across the Mason ecosystem and propose an ambitious, interdisciplinary, convergent research agenda that aligns with IDIA’s mission of catalyzing cutting-edge computing research that shapes the future of our digital society and promotes equality, well-being, security, and prosperity. More information about IDIA can be found at idia.gmu.edu.

The application template can be found at: Fellowship Application

Applications that do not follow the above template will be returned without review.

Review Process

Applications will be reviewed by a team of interdisciplinary senior researchers and faculty, and awards will be made on a competitive basis. IDIA anticipates awarding up to five IDIA predoctoral fellowships based on the quality of the applications and availability of funds.

Program Structure

IDIA predoctoral fellows are expected to become an integral part of the IDIA community. As part of IDIA’s Predoctoral Fellow Program, fellows will:

- engage in community-building events with other IDIA fellows and faculty

- collaborate with other fellows to organize and support IDIA-sponsored events, such as seminars, panels, workshops, and tutorials

- engage with IDIA’s network of academic, government, think tank, and industry partners around digital innovation

An end-of-year ‘Come Meet our Fellows’ meeting that celebrates the achievements of our IDIA predoctoral fellows is anticipated, and awardees should plan for meaningful and active participation in this event.

Fellows will provide annual reports of their progress and accomplishments. Funding is expected to be renewed on an annual basis, contingent upon a favorable internal review of the annual report and availability of funds, and will not exceed three years.

Submission Process

Applications should be compiled into a single PDF, named FirstName-LastName-IDIA-PD.pdf, where FirstName and LastName refer to the first and last names of the student applicant, and submitted via email to idia2@gmu.edu.

The email subject should be: IDIA Predoctoral Fellowship Application: First-Name Last-Name

The document format should be: font style – Arial; font size – 10; page margins – 1 inch

Important Dates

Applications should be submitted by the following deadline:

May 08, 2023, 11:59 PM EDT

Office hours are planned to field questions from interested student applicants and potential faculty mentors. They will be held remotely, via Zoom, on:

April 18, 2023, 10:00-11:00 AM, EDT

RSVP here (meeting has ended)

History and Background:

The IDIA Predoctoral Fellowship Program was launched in June 2022. View archive here.

Research Incubation

Latest seed funding call for proposals in 2021

Research Centers

University Centers

Quantum Science and Engineering Center (QSEC)

Criminal Investigations and Network Analysis Center (CINA)

Center for Resilient and Sustainable Communities (C-RASC)

Center for Advancing Human-Machine Partnership (CAHMP)

Center for Adaptive Systems of Brain-Body Interactions (CASBBI)

Departments

Schar School of Policy and Government

Department of Information Sciences and Technology

Computational and Data Sciences

Center for Spatial Information Science and Systems

Center for Collision Safety and Analysis

Biomedical Imaging and Devices

Academic Innovation and New Ventures

Mechanical Engineering

School of Integrative Studies

Statistics

Department of Electrical and Computer Engineering

Division of Learning Technologies

Computer Game Design

School of Business

Department of Cyber Security

Computer Science

Labs

Computational Reality, Creativity and Graphics Lab (CraGL)

Cryptographic Engineering Research Group

Intelligence Fusion Lab

Computer and Networking Systems Lab

Mathematics Education Center

The Center for Neural Informatics, Neural Structures, and Neural Plasticity (CN3)

Computational Neuroanatomy Group

Learning Agents Center

Computer Vision and Neural Networks Laboratory

Biomedical Imaging Lab

Digital Society

National Security Institute

Michael V. Hayden Center for Intelligence, Policy, and International Security

Institute for Philosophy and Public Policy (i3p)

Center for the Protection of Intellectual Property

Center for Regional Analysis

Center for Outreach in Mathematics Professional Learning + Educational Technology

Center for Digital Media Innovation and Diversity (CDMID)

Partner With IDIA

The outcomes generated by the IDIA community are often powered by partnerships with corporate and public sector partners. IDIA staff match the needs of our partners with hundreds of talented faculty and thousands of students, creating opportunities for strategic R&D engagements, start-up creation and growth, student internships and apprenticeships, faculty and student challenge competitions, P-12 student and teacher engagement, sponsored capstone projects, and many others.

Mason has many partnerships focused on digital innovation. Three featured partnership programs include:

Current Partnership Programs

Center for Spatiotemporal Thinking, Computing, and Applications (STC)

The Center for Spatiotemporal Thinking, Computing, and Applications is dedicated to collaborating with agencies and industry to conduct leading spatiotemporal innovations to improve human intelligence, develop new software tools, and build innovative solutions to address 21st-century challenges, such as natural hazards, environmental pollution, and emergency responses.

Commonwealth Cyber Initiative NoVa Node

The Commonwealth Cyber Initiative (CCI), funded by the Virginia General Assembly, created a Commonwealth-wide ecosystem of innovation in cybersecurity and cyber-physical systems (CPS) security. CCI will ensure Virginia is recognized as a global leader in secure CPS and in the digital economy more broadly for decades to come by supporting world-class research at the intersection of data, autonomy, and security; promoting technology commercialization and entrepreneurship; and preparing future generations of innovators and research leaders. George Mason University leads the CCI Northern Virginia Node (NoVa Node) region. Partners in the CCI NoVa Node include institutions of higher education, corporations, small businesses, non-profit organizations, P-12 schools, economic development organizations, and accelerators from 19 counties, all committed to working together to achieve the goals of the CCI.

Mason Innovation Exchange

With the goal of encouraging and celebrating entrepreneurship and innovation across the whole of the university, George Mason University has begun to build out a network of on-campus entrepreneurship-focused collaboration and maker spaces. This network is called the Mason Innovation Exchange (MIX). Programs encourage multi-disciplinary collaboration across all of Mason’s schools and degree programs (e.g. engineering, science, business, liberal arts). It is the place at Mason where groups can come together to develop ideas, research problems, craft solutions, and start companies.

IP and Licensing Opportunities

Mason brings its best research and technologies to the marketplace to change the world for the better. The Office of Technology Transfer evaluates discoveries and conducts market research. We seek partners who share Mason’s goal of working for public good.

Links to licensing opportunities:

BioHealth, Engineering, IT & Cybersecurity, Sustainability, Digital Technology, Other